One of our main values at CLICKTRUST is ‘Learn through opportunity’. That is why we try to attend several conferences each year. It was a bit more challenging this year, but SMX London still managed to amaze us with two days full of interesting talks and keynotes. Below you can find our main takeaways for SEO.

E-A-T: Identify what’s holding us back

SEO specialists these days often focus on content strategy and/or the technical aspects of their website. Izzi Smith and Jessica James are both convinced that SEOs should integrate Expertise, Authority and Trust (E-A-T) principles into this work. This means that you need satisfying, well-researched content (Expertise), that you need to show you are a leader in your industry (Authority) and that you are a reliable source of information (Trust). Your online entity should reflect all three. E-A-T is most crucial for YMYL (Your Money or Your Life) content, such as that of medical websites, financial institutions, online shops…, but they both believe it is our duty as SEOs to apply these principles to all our websites. E-A-T may not be a direct ranking factor or something you can fix quickly, but Google’s Search Quality Rater Guidelines, where the concept is described, give us a good idea of what Google considers a good website. Pages with good E-A-T will also naturally attract more backlinks.

In her talk, Jessica James takes us through all the topics she covers in her E-A-T audits, starting with off-site signals. In this step, you check online recommendations, press coverage and mentions, but also third-party review sites, Wikipedia and social media. What are people saying about your brand? What is the overall sentiment? Are people complaining about things that are unclear in your communication? Do your social media accounts and websites link to each other? In this phase, you also want to check the quality of your backlinks (a backlink audit).

The second area to check is on-site signals. Does your ‘About us’ page make clear why your brand should be seen as the expert in your field? Do you mention or link to the actual experts? Do you include links to trusted resources? Do you mention your professional credentials (if applicable)? What is the general sentiment of the on-site reviews, and does it match that of the off-site reviews?

On top of that, you need to think about entity recognition and related entities. Is the content written by actual experts (related entities)? Have you included their profiles and qualifications, and are you using structured data correctly? Does your brand have a knowledge panel that shows up for branded queries? What can you find about your business on Wikidata or Crunchbase? Can search engines recognize that your domain is part of the entity?

Furthermore, you want to avoid excessive ads and pop-ups and make sure your content is of high quality. Is the purpose of the content clear? Is the content relevant and ‘fresh’? Is it readable? Above all, avoid misleading content, clickbait titles and grammar mistakes.

Finally, you need to pay attention to Security as well. Make sure your website has an SSL certificate and HTTPS pages, the latest security protocols and the right security headers.
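To make the security-header check a little more concrete, here is a minimal Python sketch that flags missing headers in an HTTP response. The header list is our own assumption of commonly recommended headers, not a checklist from the talk, and the helper names are hypothetical.

```python
from __future__ import annotations

from urllib.request import Request, urlopen

# Commonly recommended security headers (our assumption, not an official list).
RECOMMENDED_HEADERS = (
    "Strict-Transport-Security",
    "Content-Security-Policy",
    "X-Content-Type-Options",
    "X-Frame-Options",
    "Referrer-Policy",
)


def fetch_headers(url: str) -> dict[str, str]:
    """Send a HEAD request and return the response headers as a dict."""
    with urlopen(Request(url, method="HEAD"), timeout=10) as response:
        return dict(response.headers.items())


def missing_security_headers(headers: dict[str, str]) -> list[str]:
    """Return the recommended headers that are absent (names compared case-insensitively)."""
    present = {name.lower() for name in headers}
    return [h for h in RECOMMENDED_HEADERS if h.lower() not in present]


if __name__ == "__main__":
    # Offline example; on a live site you could pass fetch_headers("https://www.example.com").
    sample = {"strict-transport-security": "max-age=31536000", "X-Frame-Options": "DENY"}
    print(missing_security_headers(sample))
```

Header names are compared case-insensitively because HTTP header names are not case-sensitive.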

Optimized B2B Landing pages: topic clusters for B2B SEO

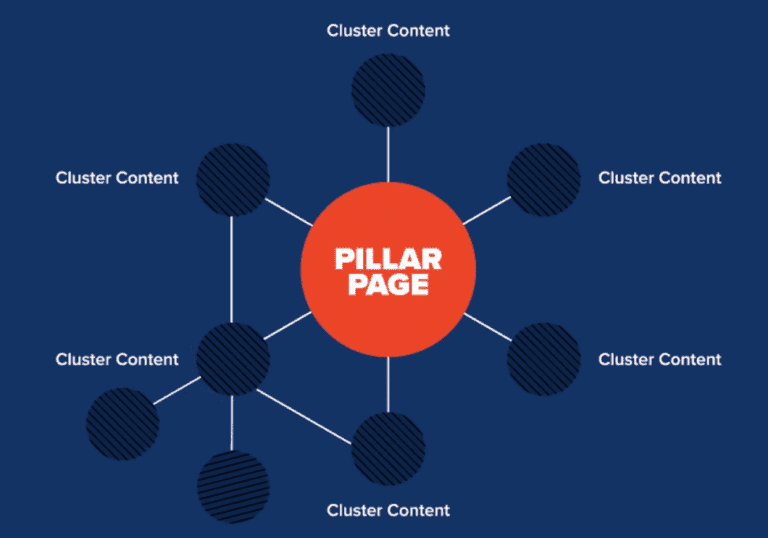

James Brockbank agrees that you need to be able to demonstrate your authority and expertise within your niche. According to him, the best way to do this is to focus on topics rather than keywords and to create topic clusters. This strategy forces you to think about how your content fits together, where each piece fits in the customer journey and how it matches customer intent. It’s no longer enough to treat content as standalone pieces.

The first thing you need to do is identify which topics you want to be known for and what your ideal customers are looking for. Each of these broad categories should get a pillar page around which you can build cluster pages.

Pillar pages should cover everything a user wants to know about a specific topic without going into too much detail; that’s what cluster pages are for. Cluster pages cover supporting subtopics: they deepen understanding, answer specific questions and target long-tail keywords. Various tools can help you uncover searchers’ intent and the questions they might have along their journey: AlsoAsked.com, AnswerThePublic… Also keep in mind that topic clusters evolve, so review and update your content regularly.

Finally, it’s crucial to link internally from the pillar page to every cluster page and back. Without these links, topic clusters aren’t really topic clusters. Ideally, the cluster pages also link to each other. Once this is in place, you can start building inbound links to these topic clusters.
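The linking rule lends itself to a quick audit. Below is a minimal Python sketch, assuming you already have a crawl export that maps each page URL to the set of internal URLs it links to; the URLs and function names are hypothetical.

```python
from __future__ import annotations


def missing_cluster_links(
    pillar: str, clusters: list[str], links: dict[str, set[str]]
) -> list[tuple[str, str]]:
    """Return (from_page, to_page) pairs for required pillar <-> cluster links that are missing."""
    missing = []
    for page in clusters:
        if page not in links.get(pillar, set()):
            missing.append((pillar, page))  # pillar should link down to the cluster page
        if pillar not in links.get(page, set()):
            missing.append((page, pillar))  # cluster page should link back up to the pillar
    return missing


def missing_cross_links(clusters: list[str], links: dict[str, set[str]]) -> list[tuple[str, str]]:
    """Optional 'nice to have' check: cluster pages that don't link to a sibling cluster page."""
    return [
        (a, b)
        for a in clusters
        for b in clusters
        if a != b and b not in links.get(a, set())
    ]


# Hypothetical crawl data: page -> internal links found on that page.
links = {
    "/crm-software/": {"/crm-software/pricing/", "/crm-software/integrations/"},
    "/crm-software/pricing/": {"/crm-software/", "/crm-software/integrations/"},
    "/crm-software/integrations/": set(),
}
clusters = ["/crm-software/pricing/", "/crm-software/integrations/"]
print(missing_cluster_links("/crm-software/", clusters, links))
print(missing_cross_links(clusters, links))
```

The two checks are kept separate because pillar-cluster links are required, while cross-links between cluster pages are only ideal.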

Competitor research in a semantic-led world

Most competitor research consists of conducting keyword research, checking the SERPs, creating a list of competitors and checking their backlinks. According to Joe Williams, this way of doing competitor analysis is too limited as it only focuses on your direct competition. In a semantic-led world in which words can have different meanings depending on the context, it’s important to go broader and deeper to find new connections, missing content, and backlink opportunities. It can also give you ideas for the topic cluster pages mentioned above.

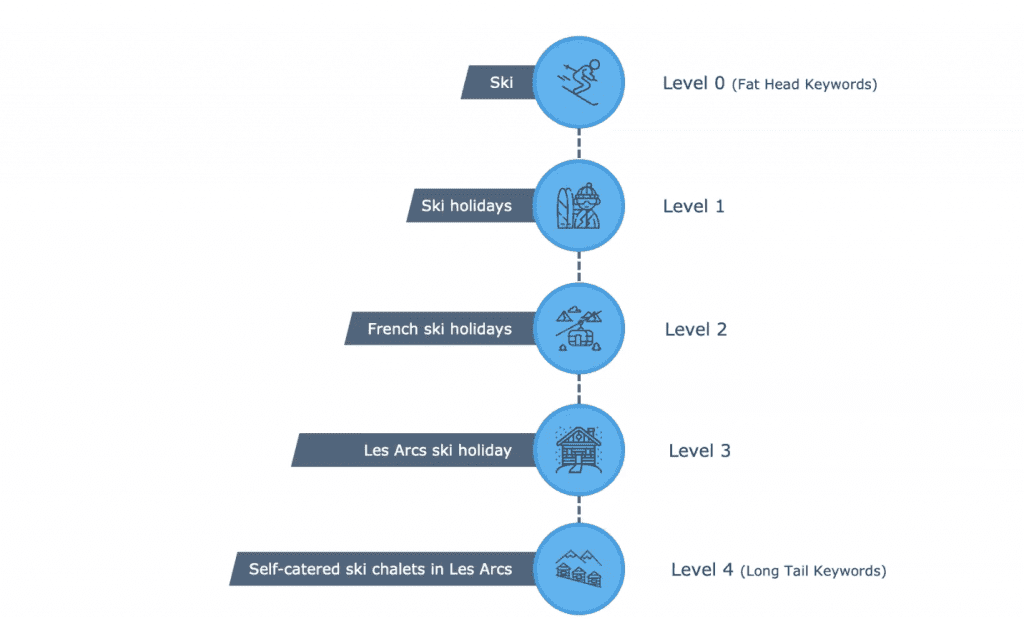

One technique he proposes is the 4-deep technique: you start from a very broad keyword and go four levels deeper, as in the illustration below. The deeper you go, the more specific the insights.

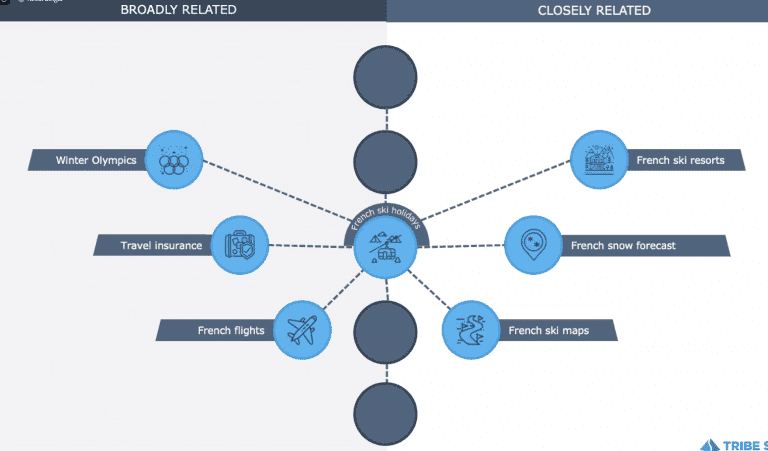

As shown in the illustration below, you should also look at broadly and closely related keywords, as long as they remain relevant. By repeating this process, you end up with a “knowledge graph” for your industry or sector that can serve as a starting point for your content and backlink strategy.

A complementary technique is the broader backlinks strategy: you also look at would-be competitors across borders. Despite the word “backlinks” in its name, it can also be used to uncover relevant content you are missing.

Leveraging schema and structured data for maximum effect

Why use schema markup?

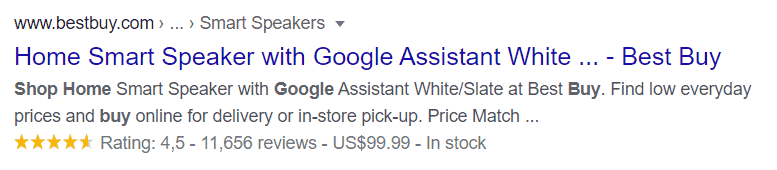

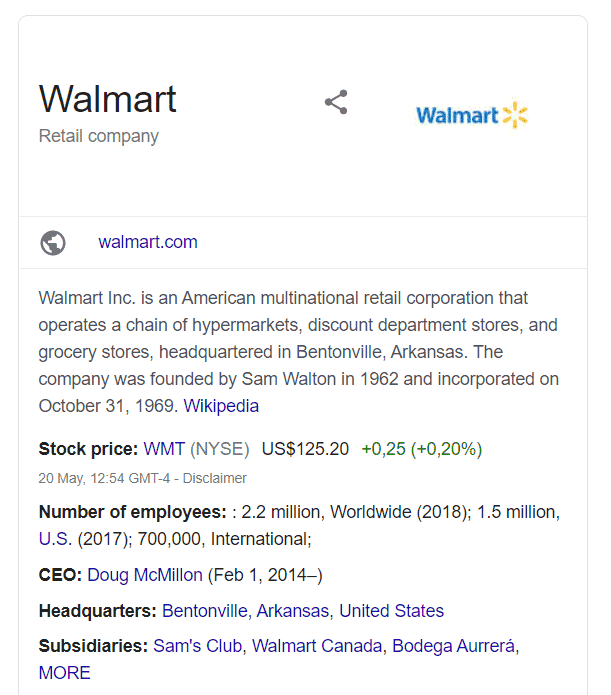

Google Search results are no longer the only thing to keep in mind as an SEO specialist: there are also Google Images, Google Lens, Google Discover and Google Assistant. That is why schema markup is key: it helps search engines understand your content and how it relates to other content on the web. As you can see in the example below, schema.org markup can also enrich your search results with rich snippets, which in turn can improve your CTR.

To really own your branded space in the SERPs, Mario La Malfa also recommends investing in your brand’s knowledge panel by keeping your Google My Business listing consistent and adding Organization schema markup to your website.
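As an illustration, the Python sketch below builds Organization markup as JSON-LD and wraps it in the script tag that goes into the page. The brand name and URLs are placeholders, and which properties to include is our assumption; `sameAs` is a natural place to list the official profiles that your website and social accounts should cross-reference.

```python
import json


def organization_jsonld(name: str, url: str, logo: str, same_as: list) -> dict:
    """Build Organization markup; `sameAs` lists the brand's official profiles elsewhere."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "sameAs": same_as,
    }


def to_script_tag(data: dict) -> str:
    """Wrap the markup in the JSON-LD script tag that goes into the page."""
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


# Placeholder values for a hypothetical brand.
markup = organization_jsonld(
    "Example Agency",
    "https://www.example.com",
    "https://www.example.com/logo.png",
    ["https://www.linkedin.com/company/example-agency", "https://twitter.com/exampleagency"],
)
print(to_script_tag(markup))
```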

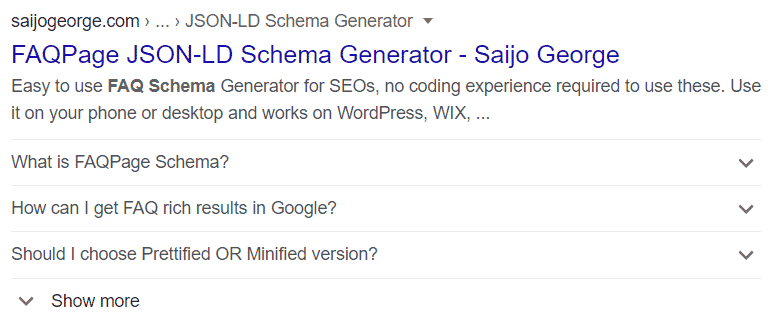

On top of that, you can use FAQ schema markup. This can both relieve your support team and increase your coverage in the SERPs.
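A minimal Python sketch of FAQPage markup, built from question and answer pairs that also appear visibly on the page. The questions are made-up examples. Google has since narrowed which sites are eligible for FAQ rich results, so check the current Search Gallery before relying on them.

```python
import json


def faq_jsonld(pairs: list) -> dict:
    """Build FAQPage markup from (question, answer) pairs shown on the page."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Hypothetical support questions.
faq = faq_jsonld([
    ("Do you ship abroad?", "Yes, we ship to all EU countries."),
    ("How can I return an item?", "Use the return form within 30 days of delivery."),
])
print(json.dumps(faq, indent=2))
```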

A final schema.org type that is definitely worth implementing is HowTo. It allows you to provide visual step-by-step guides and unlock the power of smart displays. Of course, new schema.org types and properties are added regularly, so it’s important to keep an eye on the schema.org release log as well as the Google Search Gallery.
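Here is a minimal Python sketch of HowTo markup with numbered steps. The guide and its steps are made-up examples. Google has since scaled back HowTo rich results, which is exactly why the Search Gallery is worth checking.

```python
import json


def howto_jsonld(name: str, steps: list) -> dict:
    """Build HowTo markup from (step title, step text) pairs, numbered from 1."""
    return {
        "@context": "https://schema.org",
        "@type": "HowTo",
        "name": name,
        "step": [
            {"@type": "HowToStep", "position": i, "name": title, "text": text}
            for i, (title, text) in enumerate(steps, start=1)
        ],
    }


# Hypothetical guide.
guide = howto_jsonld("How to descale a coffee machine", [
    ("Prepare the solution", "Mix descaler with water in the reservoir."),
    ("Run the cycle", "Start the descaling programme and wait until it finishes."),
    ("Rinse", "Run two cycles with clean water."),
])
print(json.dumps(guide, indent=2))
```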

How to implement schema.org using Google Tag Manager?

An easy way to implement Product schema markup at scale is with Google Tag Manager, although you still need a basic understanding of JavaScript to do this.

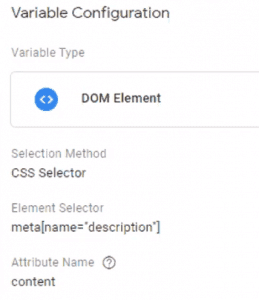

First, create custom variables. As you can see in the image below, you can assign a value to each variable (e.g. the meta description, the H1, …) using a CSS selector. Next, set up a trigger for all the pages on which you want to implement the schema markup.

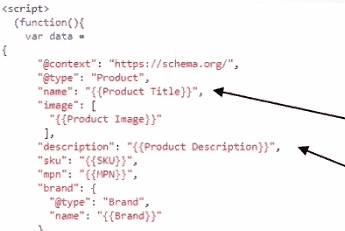

In the JSON-LD schema.org code, replace the value of each property with the corresponding custom variable you just created (in Google Tag Manager, variables are referenced as {{Variable Name}}). Once that’s done, you can create the tag using this code.
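The substitution step can be simulated offline. The Python sketch below is not how Google Tag Manager works internally. It only shows a Product JSON-LD template with {{Variable Name}} placeholders being filled and parsed, and why scraped values (for example an H1 that contains quotes) need JSON escaping to keep the markup valid. The variable names are hypothetical.

```python
import json
import re

# A Product JSON-LD template with {{Variable Name}} placeholders.
# The variable names are hypothetical examples of CSS-selector-based custom variables.
TEMPLATE = """{
  "@context": "https://schema.org",
  "@type": "Product",
  "name": "{{Product Name}}",
  "description": "{{Meta Description}}",
  "image": "{{Product Image URL}}"
}"""

PLACEHOLDER = re.compile(r"\{\{([^}]+)\}\}")


def render_template(template: str, variables: dict) -> dict:
    """Replace each {{Name}} with its JSON-escaped value, then parse the result."""

    def substitute(match):
        value = variables[match.group(1)]
        # json.dumps escapes quotes and backslashes; drop its outer quotes because
        # the template already wraps each placeholder in quotes.
        return json.dumps(value)[1:-1]

    return json.loads(PLACEHOLDER.sub(substitute, template))


product = render_template(TEMPLATE, {
    "Product Name": 'Mug "Classic" 350 ml',
    "Meta Description": "A sturdy ceramic mug.",
    "Product Image URL": "https://www.example.com/img/mug.jpg",
})
print(product["name"])  # Mug "Classic" 350 ml
```

Without the escaping, the quotes in the product name would end the JSON string early and break the markup.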

How can you test your schema.org implementation?

To see which schema markup you’re already using, you can simply check your website’s source code. However, it might be easier to use a tool like Screaming Frog. Its structured data extraction is not enabled by default, so you will need to update the spider configuration settings to include it.
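Checking the source code can be partly automated. The Python sketch below uses only the standard library to pull JSON-LD blocks out of a page’s HTML and list their `@type` values. It does not handle microdata or RDFa, and the class and function names are our own.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the parsed contents of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").strip().lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)  # script content may arrive in chunks

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append(json.loads("".join(self._buffer)))
            self._in_jsonld = False


def schema_types(html: str) -> list:
    """Return the @type of every top-level item, including items inside @graph."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    types = []
    for block in parser.blocks:
        items = block if isinstance(block, list) else block.get("@graph", [block])
        types.extend(item.get("@type") for item in items)
    return types


page = (
    '<html><head><script type="application/ld+json">'
    '{"@context": "https://schema.org", "@type": "Organization", "name": "X"}'
    "</script><script>var a = 1;</script></head>"
    '<body><script type="application/ld+json">[{"@type": "FAQPage"}]</script></body></html>'
)
print(schema_types(page))
```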

Before implementing new schema markup, it’s a good idea to test it with Google’s Structured Data Testing Tool or the Rich Results Test. These show you what Google can render on the page. Tools like SEOInfo even allow you to test your schema.org implementation while still in Google Tag Manager’s preview mode.

Behind the scenes with Google Search Console and Bing Webmaster tools

Google’s response to COVID-19

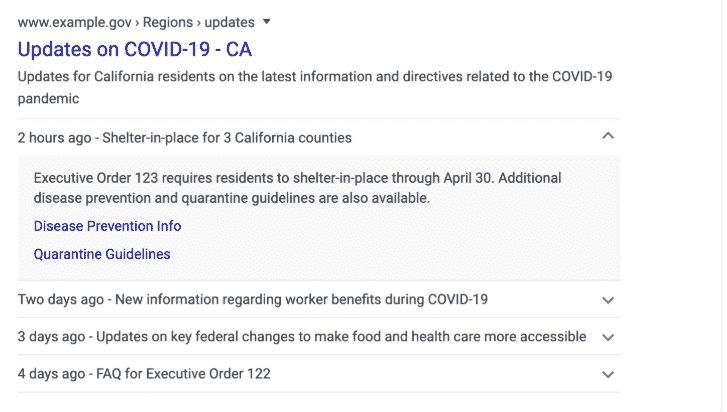

The last two and a half months have been very challenging for many companies and organizations. Customers, in turn, may have a lot of questions about COVID-19 symptoms and guidelines, closed shops, canceled or postponed events… We already knew about Google Search’s crisis response, but Daniel Waisberg walked us through some additional COVID-19 resources for your sites.

The first one is SpecialAnnouncement structured data, which you can use for announcements about rescheduled events, the closure of your physical store, guidelines… There are two ways to implement it: add the structured data to your website or submit the announcement in Google Search Console. Doing this also enables a new Special Announcement enhancement report in Search Console, which helps you fix possible implementation issues.
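The Python sketch below shows what SpecialAnnouncement markup for a temporary store closure might look like. The business, dates and text are placeholders, and the selection of properties is our assumption. Check Google’s SpecialAnnouncement documentation for which properties are required and recommended.

```python
import json
from datetime import date


def special_announcement_jsonld(
    name: str, text: str, posted: date, expires: date, business: str, business_url: str
) -> dict:
    """Build SpecialAnnouncement markup tied to a local business."""
    return {
        "@context": "https://schema.org",
        "@type": "SpecialAnnouncement",
        "name": name,
        "text": text,
        "datePosted": posted.isoformat(),
        "expires": expires.isoformat(),
        "announcementLocation": {"@type": "LocalBusiness", "name": business, "url": business_url},
    }


# Hypothetical closure notice.
notice = special_announcement_jsonld(
    "Our Brussels store is temporarily closed",
    "Online orders are still shipped as usual.",
    date(2020, 5, 18),
    date(2020, 6, 30),
    "Example Store Brussels",
    "https://www.example.com/stores/brussels",
)
print(json.dumps(notice, indent=2))
```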

(resource: developers.google.com)

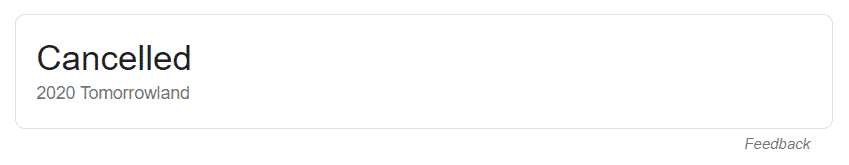

Google also added support for new eventStatus values in Event structured data: EventCancelled, EventPostponed, EventRescheduled and EventMovedOnline. These allow you to inform your customers about the status of upcoming events.

Finally, he also recommends updating the location in your Event structured data to ‘Place’ or ‘VirtualLocation’ as appropriate, and changing your business hours in Google My Business.
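The Python sketch below combines the event status values with the location types. The event name, dates and URLs are placeholders. We also set eventAttendanceMode and, for rescheduled events, previousStartDate. Both are schema.org properties, but including them here is our choice, not something from the talk.

```python
import json

# eventStatus values defined by schema.org.
EVENT_STATUSES = {
    "EventScheduled", "EventCancelled", "EventPostponed", "EventRescheduled", "EventMovedOnline",
}


def event_jsonld(name, start_date, status, *, venue_name=None, venue_address=None,
                 online_url=None, previous_start_date=None):
    """Build Event markup with a status and either a Place or a VirtualLocation."""
    if status not in EVENT_STATUSES:
        raise ValueError(f"unknown eventStatus: {status}")
    data = {
        "@context": "https://schema.org",
        "@type": "Event",
        "name": name,
        "startDate": start_date,
        "eventStatus": f"https://schema.org/{status}",
    }
    if previous_start_date:
        # For rescheduled events, keep the original date next to the new startDate.
        data["previousStartDate"] = previous_start_date
    if online_url:
        data["eventAttendanceMode"] = "https://schema.org/OnlineEventAttendanceMode"
        data["location"] = {"@type": "VirtualLocation", "url": online_url}
    else:
        data["eventAttendanceMode"] = "https://schema.org/OfflineEventAttendanceMode"
        data["location"] = {"@type": "Place", "name": venue_name, "address": venue_address}
    return data


# Hypothetical event that moved online.
moved = event_jsonld(
    "Spring Product Demo", "2020-06-15T10:00:00+02:00", "EventMovedOnline",
    online_url="https://www.example.com/live",
)
print(json.dumps(moved, indent=2))
```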

Should you take down your website during lockdown?

We’ve seen several websites do this at the beginning of the coronavirus crisis, but Daniel stressed once again that it is never a good idea to take your website offline entirely. Instead, he recommends using the Removals tool. It temporarily hides the submitted URLs from the SERPs for up to six months but doesn’t remove them from Google’s index. Unlike other solutions, such as redirecting all URLs to the homepage or noindexing or disallowing all URLs, it is easy to roll back, which minimizes the impact on rankings. You shouldn’t use it for canonicalization purposes or to remove a URL permanently, though.

The new Bing Webmaster tools

Fabrice Canel (Microsoft) announced that the Submit URL, Block URL and Crawl Control features are being migrated to the new Bing Webmaster portal. Crawl Control allows you to set a maximum crawl rate for Bingbot during your website’s peak hours to avoid server issues. The new portal will also include a new backlinks tool that combines an inbound links report with disavow functionality, as well as a new sitemap report. We can expect to see the revamped Bing Webmaster Tools in July.

Advanced GMB tactics for SEOs

Do you believe Google My Business is not important during lockdown because physical locations are closed anyway? Think again. People may no longer be looking for directions, but they still want to engage with your business. It’s important to stay active and raise awareness by providing the information customers are looking for. According to Tim Capper and Claire Carlile, product listings and COVID-19 posts combined with product-related posts seem to perform particularly well. Even more important, though, is being relevant, timely and engaging.

Of course, you will want to automate this if you have several locations. Tools like AgencyAutomators Posts allow you to share the same news across different locations. This tool has built-in UTM tagging, so you can track the actual performance of your posts. UTM tagging isn’t limited to posts, though: you can also use it for the website URL, appointment button, menu button, follow button, … in your GMB listing. The easiest way to do this is with a UTM generator. Plan this out beforehand and use consistent naming conventions to avoid messy data.
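A UTM generator is simple enough to sketch yourself. The Python example below appends UTM parameters to a GMB link, keeps any existing query parameters, and enforces one naming convention (lowercase, hyphens instead of spaces). The parameter values, such as `organic` as the medium, are placeholder assumptions for your own conventions.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def normalize(value: str) -> str:
    """Enforce one naming convention: lowercase, spaces replaced by hyphens."""
    return value.strip().lower().replace(" ", "-")


def add_utm(url: str, source: str, medium: str, campaign: str, content: str = None) -> str:
    """Append UTM parameters to `url`, keeping any query parameters it already has."""
    parts = urlsplit(url)
    query = dict(parse_qsl(parts.query))  # existing parameters come first
    query.update({
        "utm_source": normalize(source),
        "utm_medium": normalize(medium),
        "utm_campaign": normalize(campaign),
    })
    if content:
        query["utm_content"] = normalize(content)  # e.g. which GMB button was clicked
    return urlunsplit(parts._replace(query=urlencode(query)))


print(add_utm("https://www.example.com/contact", "Google", "Organic", "GMB Listing", "Appointment Button"))
# https://www.example.com/contact?utm_source=google&utm_medium=organic&utm_campaign=gmb-listing&utm_content=appointment-button
```

Normalizing the values in code means "GMB Listing" and "gmb listing" end up as the same campaign in your analytics reports.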

Once all of this is in place, you can measure which posts or buttons perform best. Don’t focus only on revenue and direct conversions, though; also check the assisted (micro) conversions that Google My Business generates.

We definitely learned a lot about SEO and can’t wait to start applying these new insights for our clients. Thanks to all the speakers and everyone who made this possible in these challenging times. Hopefully, we can attend in person next year!

Interested in what we learned about PPC and automation? You can read all about it in this article: SMX London 2020 – Key Takeaways for PPC & Automation
